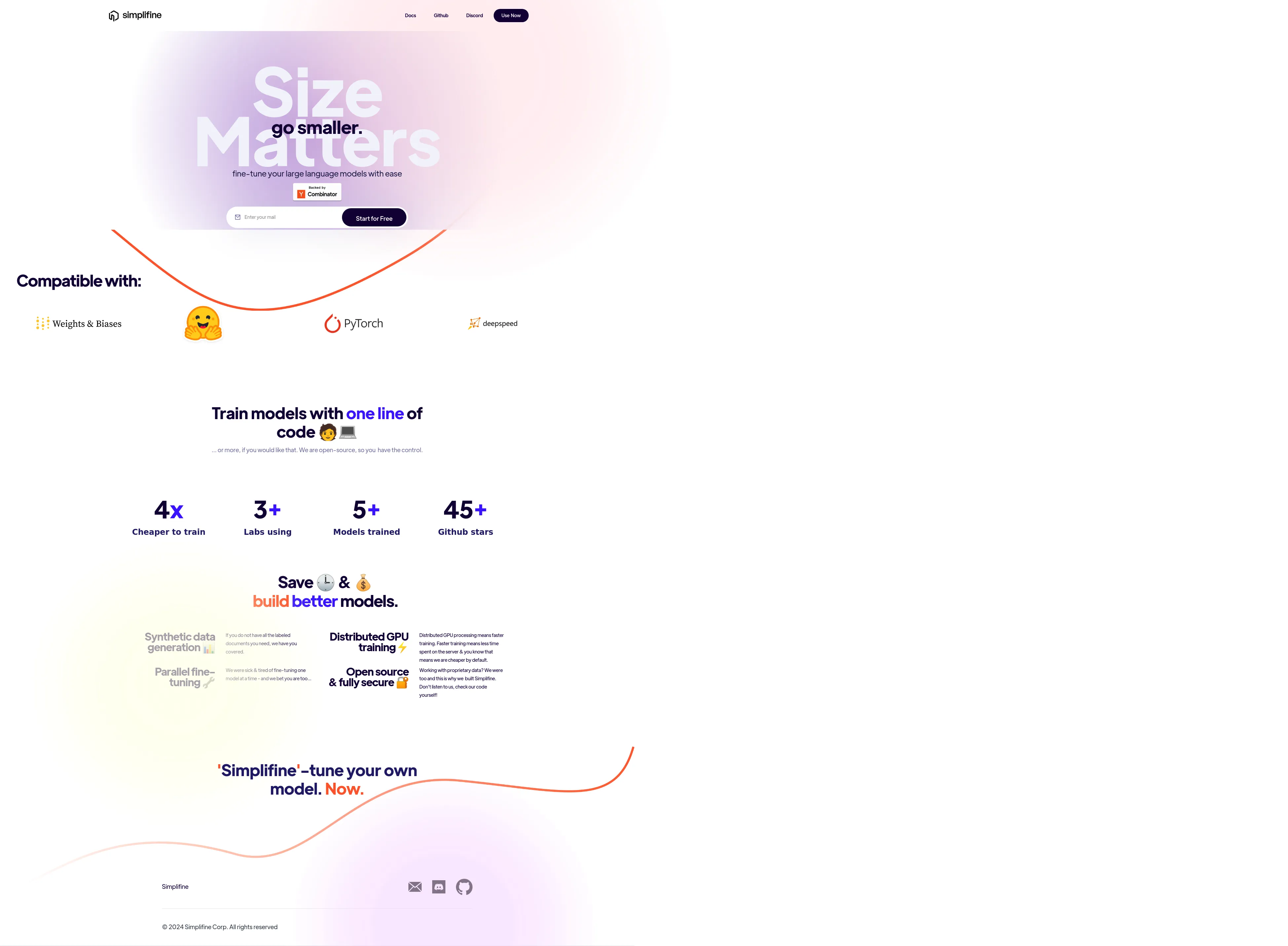

Our library includes features like:

- Seamless on-device to cloud training handoff
- AI-assisted hyperparameter selection
- Auto parallelism configurations
- Synthetic dataset generation
- Built-in evaluations

With support for various LLMs and automatic hardware optimizations, Simplifine flattens the steep learning curve, letting users focus on innovation rather than infrastructure management. Whether you're moving from local to multi-GPU instances or optimizing your resources, Simplifine helps you train LLMs efficiently and cost-effectively.

Simplifine offers competitive pricing, ensuring cost-effective model training. Contact us for detailed pricing plans tailored to your needs.

Simplifine is developed by a dedicated team of AI enthusiasts and experts committed to making model training accessible and efficient. Join our community and contribute to the future of AI development.
